Guarding Your AI Pipeline: A Practical Guide to Data Quality for ML, Generative AI, and Autonomous Agents

Overview

No one intentionally builds a flawed model. Yet many AI projects stumble because of data that looked pristine until it wasn't. A pricing model may ship with a $2.3M margin shortfall, a chatbot may deliver confidently wrong answers, or an autonomous agent may commit budget based on incomplete supplier data. Poor data quality is among the most common reasons AI initiatives stall, drift, or fail silently in production.

In traditional machine learning (ML), failures are at least visible: a dashboard shows an off number, an analyst catches it, someone retrains the model. The damage is contained. But generative AI and agentic AI break that containment. A chatbot pulling from a stale knowledge base produces a fluent, incorrect answer with no warning. An autonomous procurement agent acts on incomplete data before anyone reviews it. As AI shifts from prediction to action, tolerance for data quality failures shrinks, and catching them before harm occurs becomes much harder.

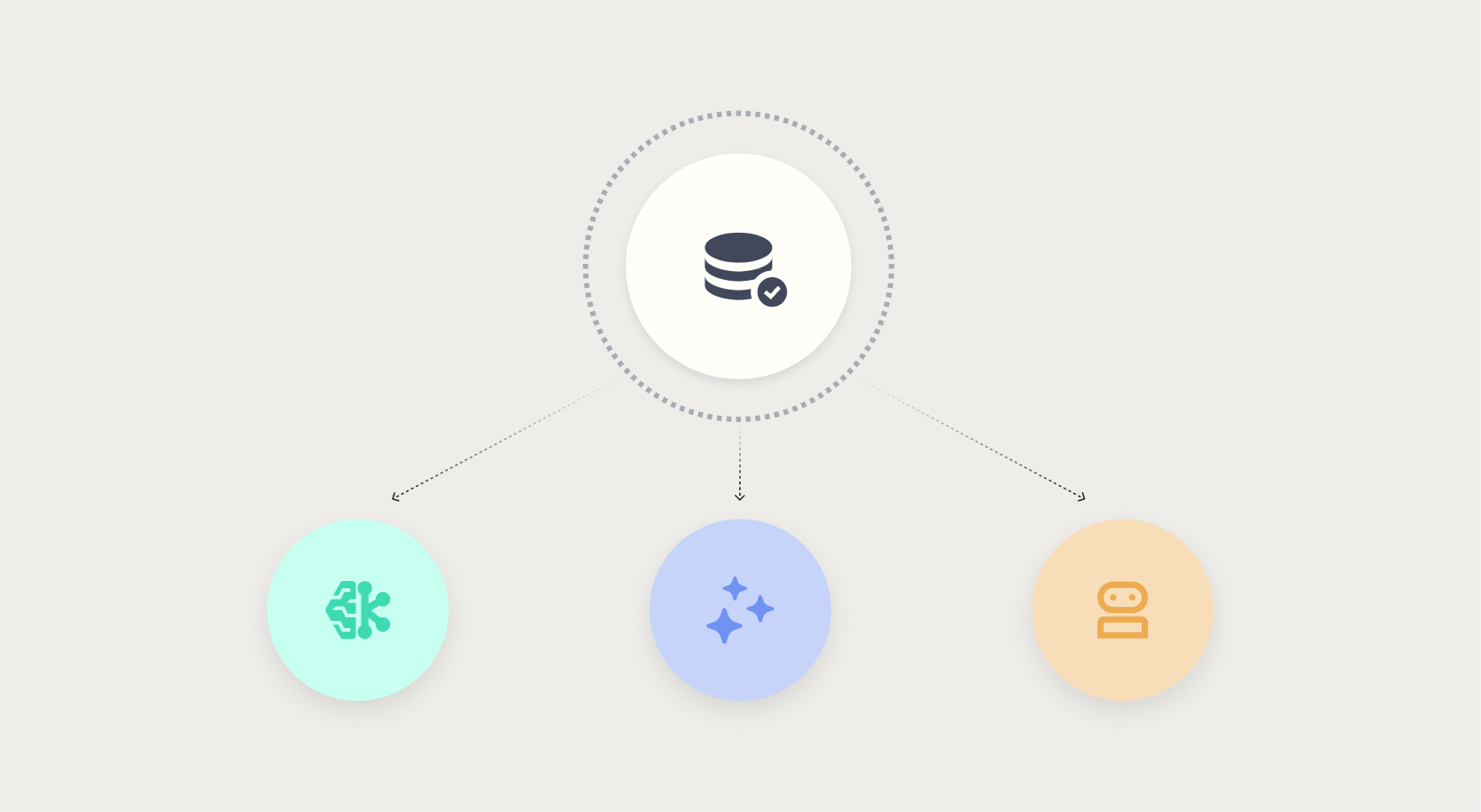

This tutorial provides a structured approach to maintaining data quality across three AI paradigms: traditional ML, generative AI, and agentic AI. You'll learn how to diagnose, prevent, and respond to quality issues at each stage of your AI pipeline.

Prerequisites

Data Understanding

- Basic familiarity with data profiling concepts (nulls, outliers, distributions).

- Access to sample datasets from your organization or public benchmarks (e.g., UCI, Kaggle).

- For generative AI: familiarity with vector databases, embeddings, and retrieval-augmented generation (RAG).

- For agentic AI: understanding of autonomous decision loops and action validation.

Tooling

- Python 3.8+ with libraries: pandas, great_expectations (for validation), langchain or llamaindex (for RAG), and simple API clients.

- A data quality framework (e.g., Great Expectations, Deequ, or custom validation functions).

- Optional: a logging/monitoring system (e.g., MLflow, DataDog, or custom dashboards).

Step-by-Step Instructions

Step 1: Establish Data Quality Baselines for Traditional ML

Start with the classic ML pipeline. Define what “clean enough” means for your use case. Common dimensions: completeness, uniqueness, accuracy, consistency, and timeliness.
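
Two of these dimensions, completeness and uniqueness, can be measured with a few lines of plain Python. The following is a minimal sketch using custom validation functions rather than any particular framework; the function names, the sample records, and the field names `sku` and `price` are illustrative assumptions, not part of an established API.

```python
from typing import Any, Dict, List


def completeness(rows: List[Dict[str, Any]], column: str) -> float:
    """Fraction of rows where `column` is present and not None."""
    if not rows:
        return 0.0
    filled = sum(1 for row in rows if row.get(column) is not None)
    return filled / len(rows)


def uniqueness(rows: List[Dict[str, Any]], column: str) -> float:
    """Fraction of non-null values in `column` that are distinct."""
    values = [row[column] for row in rows if row.get(column) is not None]
    if not values:
        return 0.0
    return len(set(values)) / len(values)


# Hypothetical sample records; field names are illustrative only.
sample_rows = [
    {"sku": "A1", "price": 10.0},
    {"sku": "A2", "price": None},
    {"sku": "A2", "price": 12.5},
    {"sku": "A3", "price": 9.0},
]

price_completeness = completeness(sample_rows, "price")  # 3 of 4 rows have a price
sku_uniqueness = uniqueness(sample_rows, "sku")          # 3 distinct values out of 4
```

What counts as "clean enough" is then a threshold on these numbers, for instance requiring completeness of at least 0.99 on the target column. The right threshold depends on the use case.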

- Profile your data. Use df.describe() or pandas-profiling (since renamed ydata-profiling) to surface missing values, outliers, and unexpected distributions.

- Write validation rules. For example, Great Expectations provides expectations such as the following, called as methods on a validator object:

      expect_column_values_to_not_be_null("price")
      expect_column_values_to_be_between("price", 0, 1000000)
      expect_table_row_count_to_be_between(1000, 20000)

- Automate checks. Schedule these rules to run before every training job. Fail fast if data doesn't meet thresholds.

- Track drift. Compare new batches against the baseline using statistical tests (Kolmogorov–Smirnov for continuous features, chi‑squared for categorical).
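
The drift comparison for a continuous feature might look like the sketch below. It computes the two-sample Kolmogorov-Smirnov statistic by hand using only the standard library and compares it with the usual large-sample critical value. The function names and the sample data are assumptions made for illustration. In practice, `scipy.stats.ks_2samp` computes the same statistic and also returns a p-value.

```python
import math
from bisect import bisect_right
from typing import Sequence


def ks_statistic(baseline: Sequence[float], batch: Sequence[float]) -> float:
    """Two-sample KS statistic: the largest gap between the two empirical CDFs."""
    a, b = sorted(baseline), sorted(batch)
    if not a or not b:
        raise ValueError("both samples must be non-empty")
    max_gap = 0.0
    # The gap can only change at observed values, so checking those is enough.
    for x in a + b:
        cdf_a = bisect_right(a, x) / len(a)
        cdf_b = bisect_right(b, x) / len(b)
        max_gap = max(max_gap, abs(cdf_a - cdf_b))
    return max_gap


def drifted(baseline: Sequence[float], batch: Sequence[float], c_alpha: float = 1.358) -> bool:
    """Flag drift when the statistic exceeds the large-sample critical value.

    c_alpha = 1.358 corresponds to a significance level of roughly 0.05.
    """
    n, m = len(baseline), len(batch)
    critical = c_alpha * math.sqrt((n + m) / (n * m))
    return ks_statistic(baseline, batch) > critical


# Hypothetical data: the new batch is the baseline shifted upward by 50.
baseline_prices = [float(x) for x in range(100)]
shifted_prices = [x + 50.0 for x in baseline_prices]

same_batch_drift = drifted(baseline_prices, baseline_prices)
shifted_batch_drift = drifted(baseline_prices, shifted_prices)
```

The critical-value approximation is only reasonable for moderately large samples. For small batches, an exact p-value from a statistics library is a safer basis for alerts.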

Step 2: Extend Quality Checks for Generative AI

Generative models don't just rely on training data; they depend on retrieval contexts. RAG pipelines are especially vulnerable to stale or contradictory sources.

- Validate knowledge sources. Ensure documents in the vector database are up to date and free from factual errors. Implement freshness checks:

      {
        "source": "knowledge_base",
        "max_age_days": 30,
        "minimum_documents": 100
      }

- Embedding quality. Test that embeddings capture meaning correctly. For example, run a small set of queries and manually verify top‑k retrievals.

- Response grounding. Use an LLM to evaluate whether each output is explicitly supported by the retrieved chunks. Flag ungrounded claims.

- Hallucination detection. Implement a separate classifier (or another LLM call) to score the confidence of each factual claim in the response.
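
One way to enforce a freshness configuration like the one in the first bullet is sketched below. It assumes each document record carries an `id` and a timezone-aware `updated_at` timestamp; those field names, the `check_freshness` function, and the sample documents are hypothetical and would need to match whatever metadata your vector store actually exposes.

```python
from datetime import datetime, timedelta, timezone
from typing import Any, Dict, List

# Same keys as the freshness config in the text; values are illustrative.
FRESHNESS_CONFIG = {"source": "knowledge_base", "max_age_days": 30, "minimum_documents": 100}


def check_freshness(documents: List[Dict[str, Any]], config: Dict[str, Any], now: datetime) -> Dict[str, Any]:
    """Identify stale documents and report whether enough fresh ones remain."""
    cutoff = now - timedelta(days=config["max_age_days"])
    stale_ids = [doc["id"] for doc in documents if doc["updated_at"] < cutoff]
    fresh_count = len(documents) - len(stale_ids)
    return {
        "stale_ids": stale_ids,
        "fresh_count": fresh_count,
        "passed": fresh_count >= config["minimum_documents"],
    }


# Fixed clock so the example is reproducible.
now = datetime(2024, 6, 1, tzinfo=timezone.utc)
docs = [
    {"id": "doc-1", "updated_at": datetime(2024, 5, 20, tzinfo=timezone.utc)},
    {"id": "doc-2", "updated_at": datetime(2024, 1, 1, tzinfo=timezone.utc)},
]
report = check_freshness(docs, FRESHNESS_CONFIG, now)
```

Here `doc-2` is reported as stale, and the check fails overall because only one fresh document remains against a minimum of 100. A failing report could block re-indexing or exclude stale documents from retrieval, depending on how strict the pipeline needs to be.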

Step 3: Implement Guardrails for Agentic AI

Autonomous agents make decisions in real time. Data quality failures here can cause irreversible actions (e.g., purchases, deletions).

- Define critical data fields. For a procurement agent, these might be supplier_id, price_per_unit, inventory_level.

- Set hard thresholds. If any critical field is null, out of range, or stale, halt the agent's action and escalate.

      class DataQualityError(Exception):
          """Raised when input data is unfit for an automated action."""

      def pre_action_check(context):
          # Reject missing or non-positive prices before the agent acts.
          if context.price is None or context.price <= 0:
              raise DataQualityError("Invalid price")
          # Reject suppliers rated below the minimum acceptable rating of 3.
          if context.supplier.rating < 3:
              raise DataQualityError("Low supplier rating")

- Log every decision. Record the data used, the action taken, and any validation results. This creates an audit trail for post‑mortems.

- Implement a human‑in‑the‑loop gate. For high‑stakes decisions, require manual approval when data quality scores fall below a threshold.
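
Decision logging and the approval gate can be combined in one small routine, sketched below. The scoring rule (share of critical fields that are non-null), the threshold of 1.0, the `decide` function, and the in-memory `audit_log` list are all assumptions chosen for illustration. A production system would use a richer score and a durable log store.

```python
import json
from datetime import datetime, timezone
from typing import Any, Dict, List

CRITICAL_FIELDS = ("supplier_id", "price_per_unit", "inventory_level")
APPROVAL_THRESHOLD = 1.0  # assumption: any missing critical field triggers review

audit_log: List[str] = []  # stand-in for a durable, append-only log


def quality_score(context: Dict[str, Any]) -> float:
    """Share of critical fields that are present and non-null."""
    present = sum(1 for field in CRITICAL_FIELDS if context.get(field) is not None)
    return present / len(CRITICAL_FIELDS)


def decide(context: Dict[str, Any], action: str) -> str:
    """Return 'execute' or 'needs_approval' and record the decision."""
    score = quality_score(context)
    outcome = "execute" if score >= APPROVAL_THRESHOLD else "needs_approval"
    audit_log.append(json.dumps({
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "action": action,
        "data": context,
        "quality_score": score,
        "outcome": outcome,
    }))
    return outcome


complete = {"supplier_id": "S-100", "price_per_unit": 4.2, "inventory_level": 50}
incomplete = {"supplier_id": "S-100", "price_per_unit": 4.2, "inventory_level": None}

first_outcome = decide(complete, "place_order")     # all fields present
second_outcome = decide(incomplete, "place_order")  # one critical field is null
```

Writing the log entry before returning means the record exists even if the downstream action later fails, which keeps the audit trail complete for post-mortems.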

Step 4: Monitor Continuously and React

Data quality is not a one‑time fix. Production data evolves, as do model behaviors.

- Set up dashboards. Track metrics like percentage of nulls, distribution shifts, retrieval accuracy, and agent failure rates.

- Alert on anomalies. Use simple threshold alerts or more sophisticated anomaly detection (e.g., Isolation Forest on collected quality metrics).

- Create a feedback loop. When a bad output is caught, trace it back to the root data cause. Update validation rules accordingly.

- Schedule regular retraining or re‑indexing of knowledge bases. This prevents drift from accumulating.
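
A simple alert on a collected quality metric could be sketched as follows. This uses a z-score rule from the standard library rather than Isolation Forest, so it is a deliberately simpler stand-in for the anomaly detection mentioned above. The function name, the threshold of 3 standard deviations, and the sample readings are assumptions.

```python
from statistics import mean, stdev
from typing import Sequence


def is_anomalous(history: Sequence[float], latest: float, z_threshold: float = 3.0) -> bool:
    """Flag `latest` when it lies more than `z_threshold` standard deviations
    from the mean of `history`."""
    if len(history) < 2:
        return False  # not enough data to estimate spread
    mu, sigma = mean(history), stdev(history)
    if sigma == 0:
        return latest != mu  # history is constant, so any change is notable
    return abs(latest - mu) / sigma > z_threshold


# Hypothetical daily null-rate readings for one column.
null_rate_history = [0.010, 0.012, 0.011, 0.009, 0.010, 0.011, 0.012]

normal_day_alert = is_anomalous(null_rate_history, 0.011)  # within the usual range
spike_alert = is_anomalous(null_rate_history, 0.080)       # sudden jump in nulls
```

A z-score rule assumes the metric is roughly stable around a mean. Metrics with strong seasonality or multiple interacting signals are where a model-based detector becomes worth the extra complexity.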

Common Mistakes

- Treating data quality as a one‑time project. It requires ongoing investment, especially as data sources change.

- Ignoring data lineage. Without knowing where a data point came from, you can't fix it when something goes wrong.

- Overlooking generative AI's reliance on retrieval context. Even a perfect LLM produces garbage if its retrieval is based on stale or conflicting data.

- Not simulating failure modes. Test agents with deliberately bad data (null values, contradictory info) to see how they behave before production.

- Assuming agentic AI systems will fail gracefully. Many are designed to proceed unless explicitly stopped. Build proactive stops.
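
Simulating failure modes can start with a small fault-injection harness like the sketch below. It takes one known-good input, generates deliberately corrupted variants, and reports any that a guard fails to reject. The `guard` function, the field names, and the corruption strategy are hypothetical. Substitute the real pre-action checks and the failure modes relevant to your agent.

```python
from typing import Any, Callable, Dict, List


class DataQualityError(Exception):
    """Raised when input data is not fit for the agent to act on."""


def guard(context: Dict[str, Any]) -> None:
    """Hypothetical pre-action guard: required fields present, price positive."""
    for field in ("supplier_id", "price_per_unit", "inventory_level"):
        if context.get(field) is None:
            raise DataQualityError(f"missing {field}")
    if context["price_per_unit"] <= 0:
        raise DataQualityError("non-positive price")


def corrupted_variants(valid: Dict[str, Any]) -> List[Dict[str, Any]]:
    """One variant per field with that field nulled, plus one with a negative price."""
    variants = [{**valid, field: None} for field in valid]
    variants.append({**valid, "price_per_unit": -1.0})
    return variants


def unguarded_cases(check: Callable[[Dict[str, Any]], None], valid: Dict[str, Any]) -> List[Dict[str, Any]]:
    """Return the corrupted inputs that the check failed to reject."""
    missed = []
    for variant in corrupted_variants(valid):
        try:
            check(variant)
        except DataQualityError:
            continue  # correctly rejected
        missed.append(variant)
    return missed


valid_context = {"supplier_id": "S-100", "price_per_unit": 4.2, "inventory_level": 50}
guard(valid_context)  # the clean input must pass without raising
missed = unguarded_cases(guard, valid_context)
```

An empty `missed` list means every injected fault was stopped. Any entry in it is a concrete input on which the agent would have proceeded, which is exactly the case to fix before production.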

Summary

Poor data quality is the silent killer of AI initiatives, especially as models move from prediction to autonomous action. By establishing baselines for traditional ML, extending checks for generative AI (RAG, hallucination), and implementing guardrails for agentic AI, you can catch failures before they cause damage. Continuous monitoring and a feedback loop turn quality from a one-time gate into an ongoing discipline. Remember: in many of these failures the AI operated as designed; it was the data that was never fit for purpose.